Shima Ghari

Society increasingly relies on computer-generated information, owing to the online capabilities that current information and telecommunication technologies provide in areas such as social networks, learning systems, shopping, and quality-of-life improvements. Multimodal Learning Analytics (MMLA) is a method in learning analytics research that makes it possible to capture large amounts of data on human activity. This study aims to provide a deeper understanding of the physical movement challenges that performers face when training in open-ended, practice-based learning settings. It also discusses how multimodal analytics systems can support performers. The study identifies ten important requirements that a system should meet in order to fulfill performers’ needs. These requirements were implemented in a low-fidelity prototype that provides a modeling movement (following Laban Movement Analysis theory), captures user data with MMLA tools, and provides personalized feedback. The results indicate that a multimodal system has the potential to support and improve the motor skill learning process through personalized help and feedback.

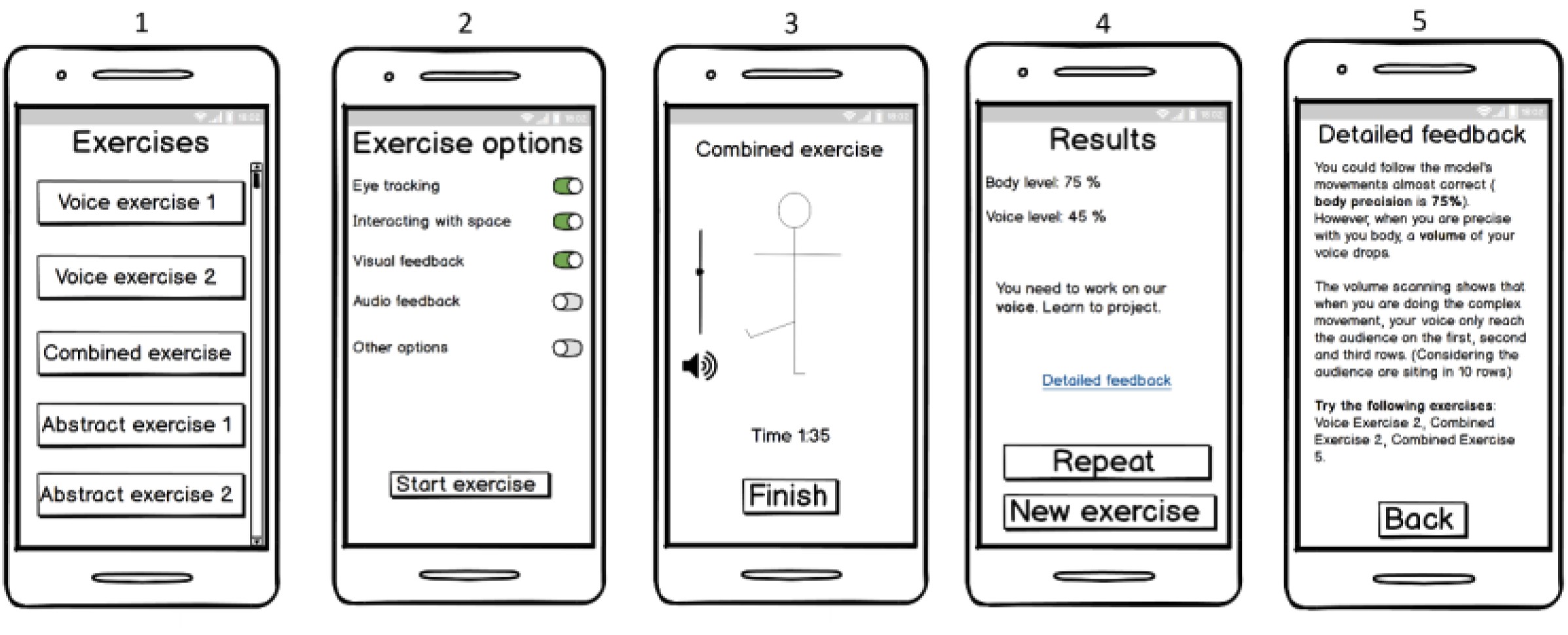

About the prototype:

The user runs an app on a smartphone that can be connected to a screen. The user can choose from a number of exercises, for example “combined exercises”. To receive personalized feedback, the user then selects from a list of options such as eye tracking, interacting with space, vocal perception, and visual feedback. Once the desired options are chosen, a modeling movement appears on the screen for the actor to follow. When the user clicks the “Finish” button, the system provides “general” and “personalized” feedback. The feedback is not designed to correct the actors but rather to offer them suggestions or points to consider.